You Gotta Study Traditional Media to Learn about the Internet

Social commentary about the internet often has a blind spot for traditional media. Many commentators believe that the internet exacerbates issues that currently plague American society, particularly misinformation and political polarization. Implicit in these claims is a posited correlation between internet usage and these negative social outcomes. However, the evidentiary support for that correlation is very often flawed. Usually, the analyses fixate on the internet and lose sight of the broader media landscape.

In assessing, for example, whether Facebook makes its users more misinformed or polarized, analysts must compare the platform with other sources of community and information about politics. No serious researcher can deny the role traditional news media, radio, and cable TV have played in deepening America’s social divisions. It’s time this reality was reflected in research and analysis about the internet’s consequences for society.

I think this is particularly true for criticism of Facebook. The company has often been at the center of arguments that the internet has been harmful for democracy. For example, journalists at the Wall Street Journal exposed decisions made by senior leadership at Facebook, including CEO Mark Zuckerberg, to shutter initiatives and research efforts aimed at reducing the platform’s divisiveness. The article implicitly supposes that using Facebook is correlated with greater misinformation and extremism than is found among people in other information environments.

In particular, they report that Monica Lee, a researcher and sociologist at Facebook, presented evidence to Zuckerberg that

‘64% of all extremist group joins are due to our recommendation tools’ and that most of the activity came from the platform’s ‘Groups You Should Join’ and ‘Discover’ algorithms: ‘Our recommendation systems grow the problem’.

This statistic has been repeated by a number of commentators and professional news publications, including the Hindustan Times’s technology section. Because Facebook very rarely shares user data with outside researchers and journalists, it represents one of the few quantitative estimates available supporting the claim that Facebook usage is correlated with negative social outcomes.

As such, understanding the flaws in this (implicit) claim of a correlation can help clarify the problems that exist in analysis of the internet more generally. I argue that Monica Lee’s estimate is fraught with problems because she, along with the reporters who uncritically accept her estimate, selects on both the dependent and independent variables. The evidence they offer is an insufficient basis for a correlation because it lacks crucial variation in the variables being compared.

This is a classic mistake in social science research. To demonstrate why variation is so crucial, I’ll offer an illustrative example. In their book Thinking Clearly with Data, Professors Ethan Bueno de Mesquita and Anthony Fowler describe how to find whether there is a correlation between a country being an autocracy and being a major exporter of oil.

It would be a mistake to simply see what proportion of major oil exporters are autocracies because you don’t know how many non-exporters are also autocracies. Instead, a better approach is comparing the proportion of autocracies between two different groups: countries that are major exporters of oil and countries that are not. If the proportion of autocracies were substantially higher or lower in the first group than in the second, then there would be support for a correlation between the two variables.
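The comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the book's own method: the country counts and the names `counts` and `autocracy_share` are invented here purely to show the arithmetic.

```python
# Hypothetical counts, invented purely for illustration (not real data).
counts = {
    "major_exporter": {"autocracy": 12, "total": 20},
    "non_exporter": {"autocracy": 45, "total": 150},
}


def autocracy_share(group):
    """Proportion of countries in the group that are autocracies."""
    return group["autocracy"] / group["total"]


share_exporters = autocracy_share(counts["major_exporter"])
share_non_exporters = autocracy_share(counts["non_exporter"])

# Looking at exporters alone yields one number with nothing to compare it to.
print(f"Autocracies among major exporters: {share_exporters:.2f}")

# Comparing both groups is what supports (or fails to support) a correlation.
difference = share_exporters - share_non_exporters
print(f"Autocracies among non-exporters:   {share_non_exporters:.2f}")
print(f"Difference in proportions:         {difference:+.2f}")
```

With these made-up counts the share among exporters is higher, which would support a positive correlation. The first number on its own would say nothing either way.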

Importantly, Monica Lee’s statistic lacks this variation because she only reports a proportion for extremist Facebook groups. She makes the same kind of mistake as someone looking only at major oil exporters in the example above: she examines a single group instead of comparing two different groups.

This problem causes a lot of confusion because, while 64% seems like a high number, we don’t know whether more or less than 64% of joins to non-extremist groups are due to recommendations by Facebook’s algorithms. In addition, the Wall Street Journal doesn’t give us any information about how Lee defined her variables. Let me make a two-way table to clarify what exactly we don’t know.

Clearly we can say that whether users join extremist groups represents the dependent variable because that’s the outcome Monica Lee is trying to understand. The scope of her focus is defined by extremist groups, and that’s what I mean when I say she “selects” on the dependent variable.

This is problematic because I think it’s intuitive that a high proportion of, say, liberal news junkies join the NPR politics podcast group because of Facebook’s recommendations. Who’s to say that the Groups You Should Join and Discover algorithms didn’t invite substantially more than 64% of members to the NPR podcast group?
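To make the point concrete, here is a small Python sketch. The only reported figure in it is the 64%. The shares for non-extremist groups are hypothetical values chosen to cover three cases, and the function name `association` is an assumption for illustration. The sketch shows only the direction of the comparison, not its statistical significance.

```python
# The 64% figure is the reported statistic; every other number is hypothetical.
EXTREMIST_SHARE_FROM_RECS = 0.64


def association(non_extremist_share_from_recs):
    """Direction of the association implied by comparing the two shares."""
    diff = EXTREMIST_SHARE_FROM_RECS - non_extremist_share_from_recs
    if diff > 0:
        return "positive"
    if diff < 0:
        return "negative"
    return "none"


# Three hypothetical shares of non-extremist group joins due to recommendations.
for hypothetical_share in (0.40, 0.64, 0.80):
    direction = association(hypothetical_share)
    print(f"non-extremist share {hypothetical_share:.2f} -> {direction} association")
```

The same 64% is compatible with a positive, a null, or a negative association, depending entirely on the number that was never reported.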

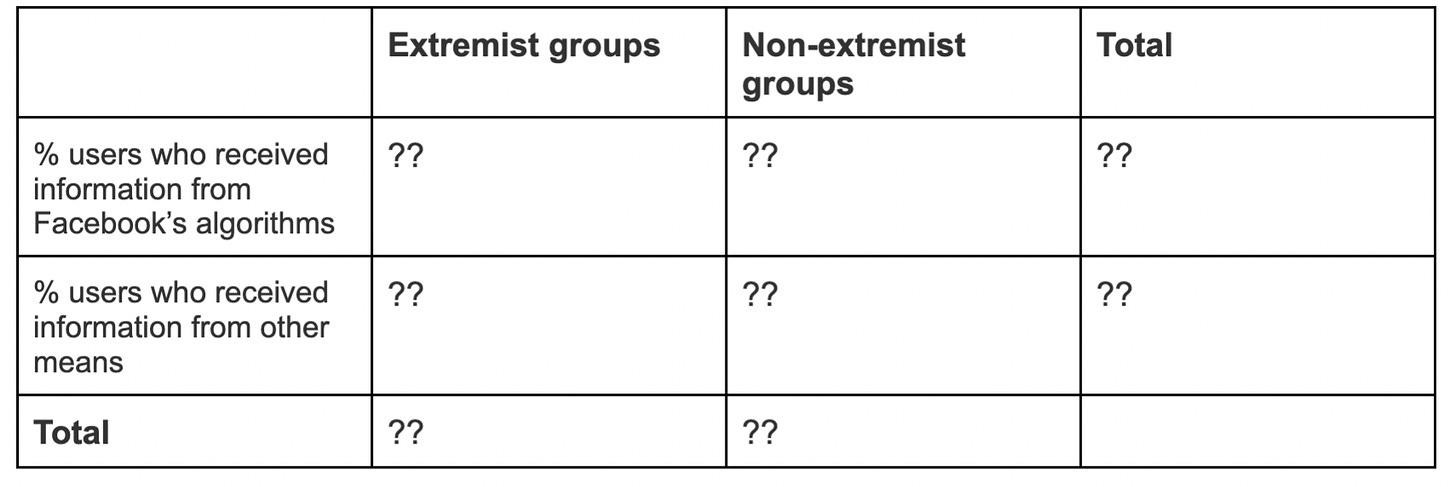

If Monica Lee did want to assess the correlation between Facebook’s group recommendations and joining extremist groups, she would have to more clearly define her variables and collect data about non-extremist groups and other ways to join social groups as well.

Comparing a well-defined set of “extremist” versus “non-extremist” groups would add crucial variation to the study, and comparing Facebook’s recommendations with other ways people learn how to join social groups would do the same. (In some sense, a recommendation is simply easy information about joining a group online.) I outline these variables within the two-way table below.

I think this case communicates a broader lesson that analysts should learn: when studying the internet, compare across media. It doesn’t make much sense to lambast Facebook for polarizing politics while ignoring the (potentially) toxic role of cable news, talk radio, and blogs.

These media also probably feature information about extremist groups, which lets people inclined to join those groups know where to find them. For the purposes of this study, a blog post about an extremist gun group’s activities would be no different from a recommendation to join that same group on Facebook.

Of course, collecting data across these various media and groups is expensive and exceptionally difficult, especially since social media platforms are unwilling to make data available for researchers.

However, research difficulty is no excuse for selecting on the independent or dependent variable. Fixating on the internet at the expense of other important media leads to flawed decision-making and a disproportionate focus on social media platforms like Twitter and Facebook.

Researchers have been able to collect data about a variety of media to compare online versus traditional media to understand how the overall communications landscape affects news consumption and politics.

Academics Richard Fletcher and Rasmus Nielsen employed survey data from the 2016 Reuters Institute Digital News Report to assess the correlation between online news sources and the extent of audience fragmentation across several Western countries, including the United States.

Contrary to conventional wisdom, they found that greater choice among a variety of news sources was associated with higher overlap in audiences because people tend to simultaneously consume news from niche outlets and the more popular sources that they share with others. This study benefits from variation in its independent variable, the different types of media where people get their news, to unearth something unexpected about the internet.

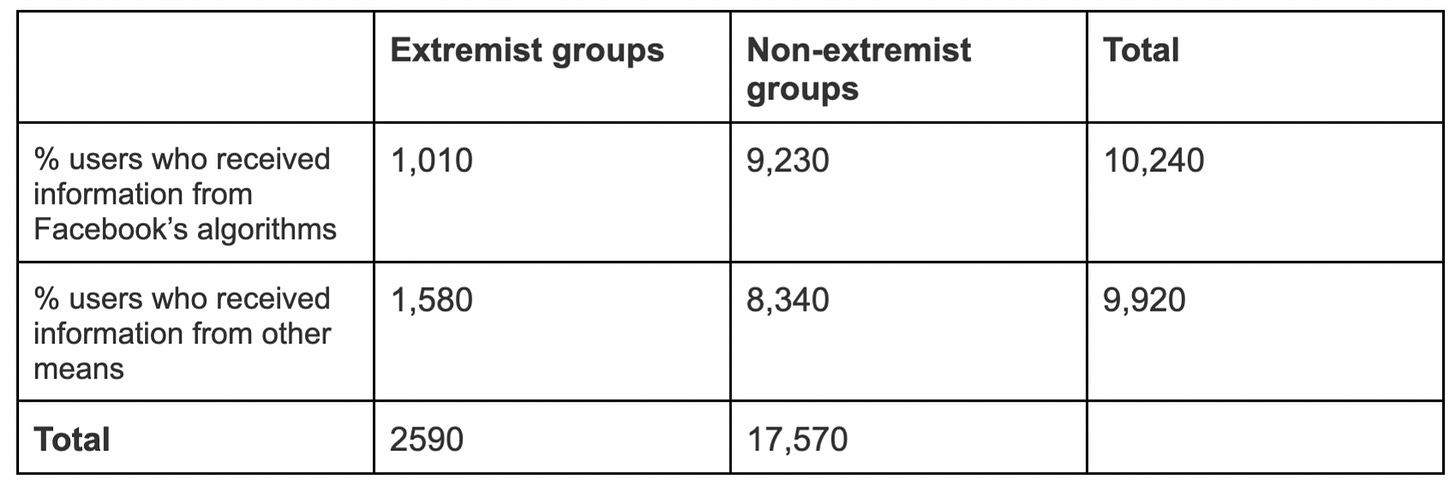

If Monica Lee had had more resources at her disposal, she might have done something similar. Perhaps she could have surveyed a random sample of 10,000 Americans and asked them whether they got information about how to join groups from the various media they use. Of course, those surveyed might not be willing to admit their affiliation with extremist groups, but I’m ignoring this problem for the sake of demonstrating my broader point. Studying non-internet media is crucial for establishing correlations about the internet itself.

Below I’ve filled in some hypothetical proportions in the table I created above.

Note: these numbers have absolutely no basis in evidence, but I offer them as not completely implausible estimates for the proportion of survey respondents in Lee’s hypothetical research design who would report receiving information about groups from the algorithm or other media like blogs, talk radio, cable TV, and other digital media.

Supposing the validity of these data, Lee could have established whether there is a correlation between Facebook’s algorithm and receiving information about joining extremist groups.

If the proportion of respondents who received such information from Facebook’s algorithms (the top left quadrant divided by the first row’s total) was higher than the proportion who received the same information from the other media (the bottom left quadrant divided by the second row’s total), a positive correlation would be supported. If the opposite were the case, then a negative correlation would be supported. If the two proportions were roughly equal, there would be little or no correlation.

I make the first proportion (.10) less than the second (.16) to further demonstrate my point. In this case, the correlation between Facebook’s algorithm and receiving information about extremist groups would be negative, perhaps because the algorithm has been trained to know that extremist groups are unlikely to be popular with most users.
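The arithmetic behind this comparison can be sketched as follows. The cell counts are assumptions chosen only so that the row proportions come out to the hypothetical .10 and .16 for a 10,000-person survey. The split of respondents between rows and the helper names are likewise invented for illustration. The phi coefficient is included as one standard summary of association in a 2x2 table; it is not something the Wall Street Journal or Lee reported.

```python
import math

# Rows: source of information; columns: type of group.
# All cell counts are invented to reproduce the illustrative proportions
# (.10 and .16) for a hypothetical survey of 10,000 respondents.
table = {
    "facebook_algorithm": {"extremist": 400, "non_extremist": 3600},
    "other_media": {"extremist": 960, "non_extremist": 5040},
}


def extremist_share(row):
    """Left cell divided by the row total."""
    return row["extremist"] / (row["extremist"] + row["non_extremist"])


def phi_coefficient(t):
    """Phi coefficient for a 2x2 table; its sign gives the direction."""
    a = t["facebook_algorithm"]["extremist"]
    b = t["facebook_algorithm"]["non_extremist"]
    c = t["other_media"]["extremist"]
    d = t["other_media"]["non_extremist"]
    return (a * d - b * c) / math.sqrt((a + b) * (c + d) * (a + c) * (b + d))


p_facebook = extremist_share(table["facebook_algorithm"])
p_other = extremist_share(table["other_media"])
phi = phi_coefficient(table)

print(f"Facebook algorithm: {p_facebook:.2f}")
print(f"Other media:        {p_other:.2f}")
print(f"Phi coefficient:    {phi:+.3f}")
```

Because the first row's proportion is lower than the second's, phi comes out negative, matching the direction described above. A real study would also need to test whether the difference is larger than sampling noise.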

Though contrary to prevailing views of social media, I don’t think it’s completely unreasonable to believe that an algorithm might have a finer-grained sense of which groups users would want to join than, say, a talk radio host or a blogger. It’s unclear to me that more personalized recommendations, everything else being equal, necessarily lead to more information about joining extremist groups than non-internet media, especially in light of the findings by Fletcher and Nielsen.

This uncertainty is why we need empirical investigation of the internet! And why that research needs to focus less on new media, especially the platforms!

Analysts and commentators must cease their fixation on the internet and consider the broader media landscape. The Wall Street Journal made this mistake in its reporting of Monica Lee’s findings. The outsized focus on social media continues to be unwise and overlooks other drivers of negative social outcomes. Facebook and Twitter are hardly the only sources of misinformation and polarization in the United States. When it comes to the internet, we all need to stop selecting on the independent variable. That way we can find out where the problems truly lie.